The far-right QAnon conspiracy theory is so sprawling, it’s hard to know where people join. Last week, it was 5G cell towers, this week it’s Wayfair; who knows what next week will bring? But QAnon’s followers always seem to begin their journey with the same refrain: “I’ve done my research.”

I’d heard that line before. In early 2001, the marketing for Steven Spielberg’s latest movie, A.I., had just begun. YouTube wouldn’t launch for another four years, so you had to be eagle-eyed to spot the unusual credit next to Haley Joel Osment, Jude Law, and Frances O’Connor: Jeanine Salla, the movie’s “Sentient Machine Therapist”.

Soon after, Ain’t It Cool News (AICN) posted a tip from a reader:

Type her name in the Google.com search engine, and see what sites pop up…pretty cool stuff! Keep up the good work, Harry!! –ClaviusBase

(Yes, in 2001 Google was so new you had to spell out its web address.)

The Google results began with Jeanine Salla’s homepage but led to a whole network of fictional sites. Some were futuristic versions of police websites or lifestyle magazines; others were inscrutable online stores and hacked blogs. A couple were in German and Japanese. In all, over twenty sites and phone numbers were listed.

By the end of the day, the websites racked up 25 million hits, all from a single AICN article suggesting readers ‘do their research’. It later emerged they were part of the first-ever alternate reality game (ARG), The Beast, developed by Microsoft to promote Spielberg’s movie.

The way I’ve described it here, The Beast sounds like enormous fun. Who wouldn’t be intrigued by a doorway into 2142 filled with websites and phone numbers and puzzles, with runaway robots who need your help and even live events around the world? But consider how much work it required to understand the story and it begins to sound less like “watching TV” fun and more like “painstaking research” fun. Along with tracking dozens of websites that updated in real time, you had to solve lute tablature puzzles, decode Base64 messages, reconstruct 3D models of island chains that spelt out messages, and gather clues from newspaper and TV adverts across the US.

This purposeful yet bewildering complexity is the complete opposite of what many associate with conventional popular entertainment, where every bump in your road to enjoyment has been smoothed away in the pursuit of instant engagement and maximal profit. But there’s always been another kind of entertainment that appeals to different people at different times, one that rewards active discovery, the drawing of connections between clues, the delicious sensation of a hunch that pays off after hours or days of work. Puzzle books, murder mysteries, adventure games, escape rooms, even scientific research – they all aim for the same spot.

What was new in The Beast and the ARGs that followed it lay less in the specific puzzles and stories they incorporated than in the sheer scale of the worlds they realised – so vast and fast-moving that no individual could hope to comprehend them. Instead, players were forced to co-operate, sharing discoveries and solutions, exchanging ideas, and creating resources for others to follow. I’d know: I wrote a novel-length walkthrough of The Beast when I was meant to be studying for my degree at Cambridge.

QAnon is not an ARG. It’s a dangerous conspiracy theory, and there are lots of ways of understanding conspiracy theories without ARGs. But QAnon pushes the same buttons that ARGs do, whether by intention or by coincidence. In both cases, “do your research” leads curious onlookers to a cornucopia of brain-tingling information.

In other words, maybe QAnon is… fun?

ARGs never made it big. They came too early, and it’s hard to charge for a game that you stumble into through a Google search. But maybe their purposely fragmented, internet-native, community-based form of storytelling and puzzle-solving was just biding its time…

This blog post expands on the ideas in my Twitter thread about QAnon and ARGs, and incorporates many of the valuable replies. Please note, however, that I’m not a QAnon expert and I’m not a scholar of conspiracy theories. I’m not even the first to compare QAnon to LARPs and ARGs.

But my experience as lead designer of Perplex City, one of the world’s most popular and longest-running ARGs, gives me a special perspective on QAnon’s game-like nature. My background as a neuroscientist and experimental psychologist also gives me insight into what motivates people.

Today, I run Six to Start, best known for Zombies, Run!, an audio-based augmented reality game with half a million active players, and I’m writing a book about the perils and promise of gamification.

It’s Like We Did It On Purpose

When I was designing Perplex City, I loved sketching out new story arcs. I’d create intricate chains of information and clues for players to uncover, colour-coding them by website and character. There was a knack to having enough parallel strands of investigation going on so that players didn’t feel railroaded, but not so many that they were overwhelmed. It was a particular pleasure to have seemingly unconnected arcs intersect after weeks or months.

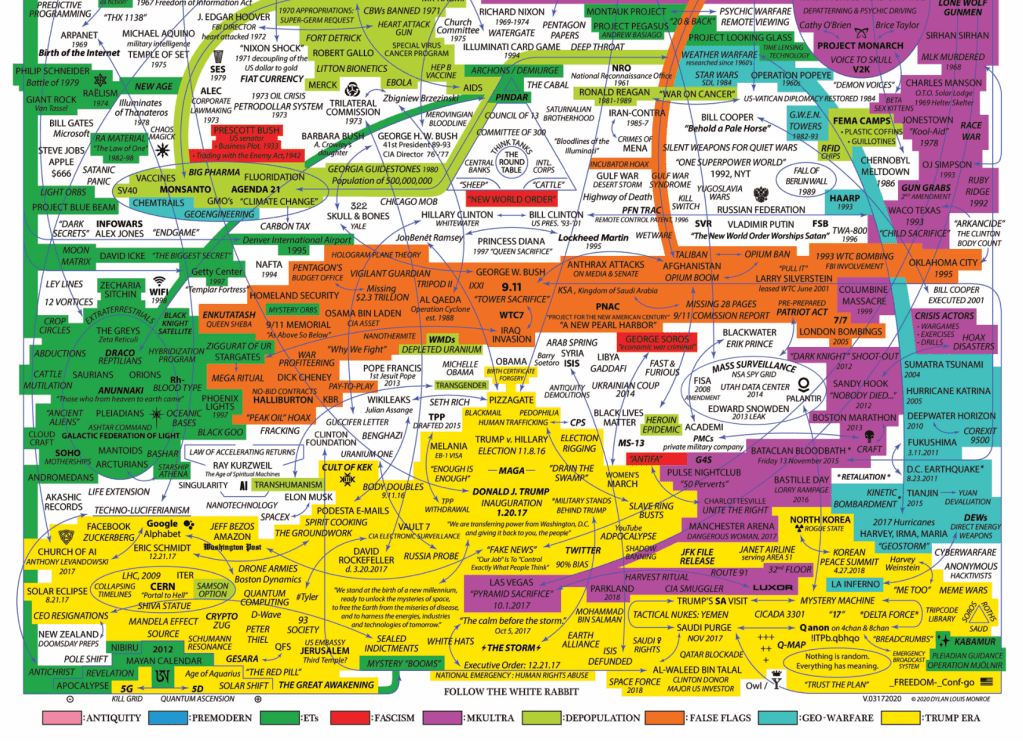

No-one would mistake the clean lines of my flowcharts for the snarl of links that makes up a QAnon theory, but the principles are similar: one discovery leading to the next. Of course, these two flowcharts are very different beasts. The QAnon one is an imaginary, retrospective description of supposedly connected data, while mine is a prescriptive network of events I designed in advance.

Except that’s not quite true. In reality, Perplex City players didn’t always solve our puzzles as quickly as we intended them to, or they became convinced their incorrect solution was correct, or, embarrassingly, our puzzles were broken and had no solution at all. In those cases we had to rewrite the story on the fly.

When this happens in most media, you just hold up your hands and admit you made a mistake. In videogames, you can issue an online update and hope no-one’s the wiser. But in ARGs, a public correction would shatter the players’ collective, uniquely prolonged suspension of disbelief in the story. This was thought to be so integral to the appeal of ARGs that it had its own name: TINAG, or “This is Not a Game”.

So when we messed up in Perplex City, we tried mightily to avoid editing websites, a sure sign this was, in fact, a game. Instead, we’d fix it by adding new storylines and writing through the problem (it helped to have a crack team of writers and designers, including Naomi Alderman, Andrea Phillips, David Varela, Dan Hon, Jey Biddulph, Fi Silk, Eric Harshbarger, and many, many others).

We had a saying when these diversions worked out especially well: “It’s like we did it on purpose.”

Every ARG designer can tell a similar war story. Here’s Josh Fialkov, writer for the Lonelygirl15 ARG/show:

Our fans/viewers would build elaborate (and pretty neat) theories and stories around the stories we’d already put together and then we’d merge them into our narrative, which would then engage them more.

The one I think about the most is we were shooting something on location and we’re run and gunning. We fucked up and our local set PA ended up in the background of a long selfie shot. We had no idea. It was 100% a screw up. The fans became convinced the character was in danger. And then later when that character revealed herself as part of the evil conspiracy — that footage was part of the audiences proof that she was working with the bad guys all along — “THATS why he was in the background!”

They literally found a mistake – made it a story point. And used it as evidence of their own foresight into the ending — despite it being, again, us totally being exhausted and sloppy. And at the time hundreds of thousands of people were participating and contributing to a fictional universe and creating strands upon strands.

Conspiracy theories and cults evince the same insouciance when confronted with inconsistencies or falsified predictions; they can always explain away errors with new stories and theories. What’s special about QAnon and ARGs is that these errors can be fixed almost instantly, before doubt or ridicule can set in. And what’s really special about QAnon is how it’s absorbed all other conspiracy theories to become a kind of ur-conspiracy theory, such that it seems pointless to call out inconsistencies. In any case, who would you even be calling out when so many QAnon theories come from followers rather than “Q”?

Yet the line between creator and player in ARGs has also long been blurry. That tip from “ClaviusBase” to AICN that catapulted The Beast to massive mainstream coverage? The designers more or less admitted it came from them. Indeed, there’s a grand tradition of ARG “puppetmasters” (an actual term used by devotees) sneaking out from “behind the curtain” (ditto) to create “sockpuppet accounts” in community forums to seed clues, provide solutions, and generally chivvy players along the paths they so carefully designed.

As an ARG designer, I used to take a hard line against this kind of cheating, but in the years since I’ve mellowed somewhat, mostly because it can make the game more fun and, ultimately, because everyone expects it these days. That’s not the case with QAnon.

Yes, anyone who uses 4chan and 8chan understands that anonymity is baked into the system such that posters frequently create entire threads where they argue against themselves in the guise of anonymous users who are impossible to distinguish or trace back to a single individual – but do the more casual QAnon followers know that?

Local Fame

Pop culture’s conspiracy theorist sits in a dark basement stringing together photos and newspaper clippings on their crazy wall. Apart from the rare occasions it leads somewhere useful, it’s an unenviable pursuit. Anyone choosing such an existence tends to be shunned by society.

But this ignores one gaping fact: piecing together theories is really satisfying. Writing my walkthrough for The Beast was rewarding and meaningful, appreciated by an enthusiastic community in a way that my molecular biology essays most certainly were not. Anne Helen Petersen found the same pleasure extends to QAnon devotees:

Was interviewing a QAnon guy the other day who told me just how deeply pleasurable it is for him to analyze/write his “stories” after his kids go to sleep on Q Drops

Online communities have long been dismissed as inferior in every way to “real” friendships, an attenuated version that’s better than nothing, but not something that anyone should choose. Yet ARGs and QAnon (and games and fandom and so many other things) demonstrate there’s an immediacy and scale and relevance to online communities that can be more potent and rewarding than a neighbourhood bake sale. This won’t be news to most of you, but I think it’s still news to decision-makers in traditional media and politics.

Good ARGs are deliberately designed with puzzles and challenges that require unusual talents – I designed one puzzle that required a good understanding of ancient Egyptian hieroglyphs – and with problems so large that they require crowdsourcing to solve, so that all players feel like welcome and valued contributors.

Needless to say, that feeling is missing from many people’s lives:

ARGs are generally a showcase for special talent that often goes unrecognized elsewhere. I have met so many wildly talented people wth weird knowledge through them.

If you’re first to solve a puzzle or make a connection, you can attain local fame in ARG communities, as Dan Hon notes. The vast online communities for TV shows like Lost and Westworld, with their purposefully convoluted mystery box plots, also reward those who guess twists early, or produce helpful explainer videos. Yes, the reward is “just” internet points in the form of Reddit upvotes, but the feeling of being appreciated is very real. It’s no coincidence that Lost and Westworld both used ARGs to promote their shows.

Wherever you have depth in storytelling or content or mechanics, you’ll find the same kind of online communities. Games like Bloodborne, Minecraft, Stardew Valley, Dwarf Fortress, Animal Crossing, Eve Online, and Elite Dangerous all share the same race for discovery. These discoveries eventually get processed into explainer videos and Reddit posts that are more accessible for wider audiences.

The same has happened with modern ARGs, where explainer videos have become so compelling they rack up more views than the ARGs have players (not unlike Twitch). Michael Andersen of ARGN is a fan of this trend, but wonders about its downside – with reference to conspiracy theorists:

I am usually a massive proponent for consuming things like alternate reality games as passive, lean-back media: what McLuhan referred to as “cool media”. However, engaging that way strips out one of its most important lessons, about the power and limits of the crowd.

See, when you’re reading (or watching) a summary of an ARG? All of the assumptions and logical leaps have been wrapped up and packaged for you, tied up with a nice little bow. Everything makes sense, and you can see how it all flows together.

Living it, though? Sheer chaos. Wild conjectures and theories flying left and right, with circumstantial evidence and speculation ruling the day. Things exist in a fugue state of being simultaneously true-and-not-true, and it’s only the accumulation of evidence that resolves it. And acquiring a “knack” for sifting through theories to surface what’s believable is an extremely valuable skill – both for actively playing ARGs, and for life in general.

And sometimes, I worry that when people consume these neatly packaged theories that show all the pieces coming together, they miss out on all those false starts and coincidences that help develop critical thinking skills. …because yes, conspiracy theories try and offer up those same neat packages that attempt to explain the seemingly unexplained. And it’s pretty damn important to learn how groups can be led astray in search of those neatly wrapped packages.

“SPEC”

I’m a big fan of the SCP collaborative-fiction project. Its top-rated stories rank among the best science fiction and horror I’ve read. A few years ago, I wrote my own (very silly) story, SCP-3993, where New York’s ubiquitous LinkNYC internet kiosks are cover for a mysterious reality-altering invasion.

Like the rest of SCP, this was all in good fun, but I recently discovered LinkNYC is tangled up in QAnon conspiracy theories. To be fair, you can say the same thing about pretty much every modern technology, but it’s not surprising their monolith-like presence caught conspiracy theorists’ attention as it did mine:

You now have this linkNYC system; they seem to be a replacement for public phone-booths. Those things look pretty creepy.

It’s not unreasonable to be creeped out by LinkNYC. In 2016, the New York Civil Liberties Union wrote to the mayor about “the vast amount of private information retained by the LinkNYC system and the lack of robust language in the privacy policy protecting users against unwarranted government surveillance.” Two years later, kiosks along Third Avenue in Midtown mysteriously blasted out a slowed-down version of the Mister Softee theme song. So there’s at least some cause for speculation. The problem is when speculation hardens into reality.

Not long after the AICN post, The Beast’s players set up a Yahoo Group mailing list called Cloudmakers, named after a boat in the story. As the number of posts rose to dozens and then hundreds per day, it became obvious to list moderators (including me) that some form of organisation was in order. One rule we established was that posts should include a prefix in their subject so members could easily distinguish website updates from puzzle solutions.

My favourite prefix was “SPEC”, a catch-all for any kind of unfounded speculation, most of which was fun nonsense but some of which ended up being true. There were no limits on what or how much you could post, but you always had to use the prefix so people could ignore it. Other moderated communities have similar guidelines, with rationalists using their typically long-winded “epistemic status” metadata.
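The Cloudmakers rule amounts to a tagging protocol: every post declares what kind of post it is, so readers can filter out what they don’t want. Here is a minimal sketch of that filter, assuming tags are written at the start of the subject line as `[SPEC]` or `SPEC:`; that bracket/colon format and the other tag names (`UPDATE`, `SOLUTION`) are illustrative assumptions, not the list’s actual conventions.

```python
import re
from typing import Optional

# Assumed (hypothetical) subject-line convention: a leading tag written
# as "[SPEC]" or "SPEC:". The real Cloudmakers format may have differed.
TAG_PATTERN = re.compile(r"^\s*(?:\[(\w+)\]|(\w+):)")


def subject_tag(subject: str) -> Optional[str]:
    """Return the upper-cased leading tag of a subject line, or None if untagged."""
    match = TAG_PATTERN.match(subject)
    if match is None:
        return None
    return (match.group(1) or match.group(2)).upper()


def hide_speculation(subjects: list[str]) -> list[str]:
    """Drop SPEC-tagged posts; everything else, tagged or not, stays visible."""
    return [s for s in subjects if subject_tag(s) != "SPEC"]


if __name__ == "__main__":
    # Made-up subject lines for illustration only.
    inbox = [
        "[UPDATE] New page on Jeanine Salla's site",
        "[SPEC] The boat name is a clue",
        "SPEC: Maybe the island chain spells a date",
        "[SOLUTION] Lute tablature puzzle",
    ]
    for subject in hide_speculation(inbox):
        print(subject)
```

The point of the design is that speculation is never banned, only labelled: anyone who wants the “fun nonsense” can still read it, and anyone who doesn’t can skip it with a one-line filter.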

Absent this kind of moderation, speculation ends up overwhelming communities, since it’s far easier and more fun to bullshit than to do actual research. And if speculation is repeated enough times, if it’s finessed enough, it can harden into accepted fact, leading to devastating and even fatal consequences.

I’ve personally been the subject of this process thanks to my work in ARGs – not just once, but twice.

The first occasion was fairly innocent. One of our more famous Perplex City puzzles, Billion to One, was a photo of a man. That’s it. The challenge was to find him. Obviously, we were riffing on the whole “six degrees of separation” concept. Some thought it’d be easy, but I was less convinced. Sure enough, fourteen years on, the puzzle is still unsolved, but not for lack of trying. Every so often, the internet rediscovers the puzzle amid a flurry of YouTube videos and podcasts; I can tell whenever this happens because people start DMing me on Twitter and Instagram.

A clue in the puzzle is the man’s name, Satoshi. It is not a rare name, and it happens to be the same as that of Satoshi Nakamoto, the presumed pseudonym of the person or persons who developed bitcoin. So of course people think Perplex City’s Satoshi created bitcoin. Not a lot of people, to be fair, but enough that I get DMs about it every week. But it’s all pretty innocent, like I said.

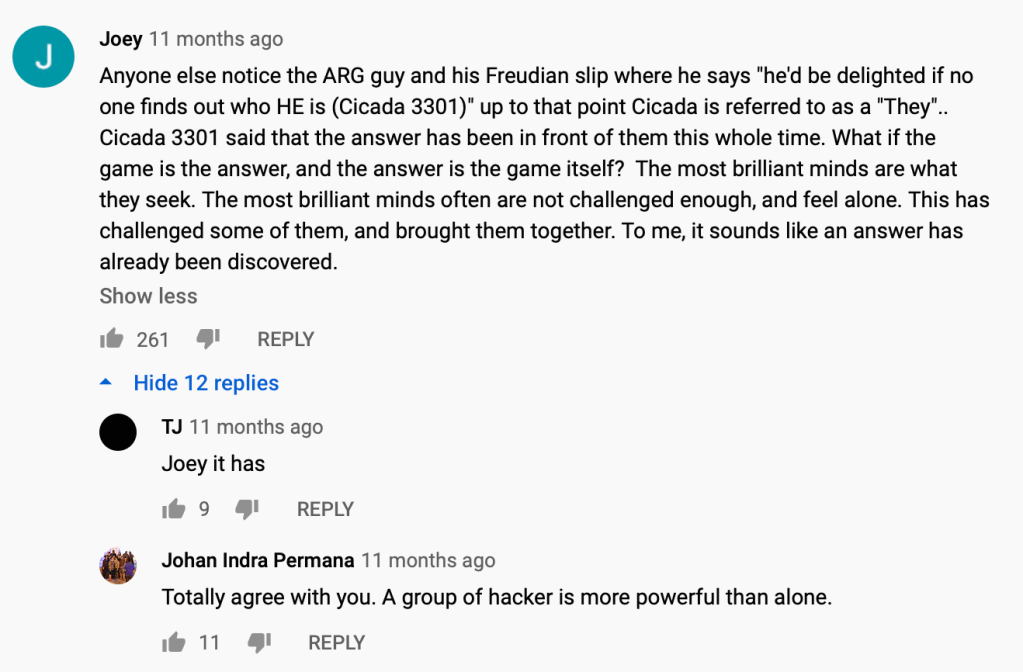

More concerning is my presumed connection to Cicada 3301, a mysterious group that recruited codebreakers through very difficult online puzzles. Back in 2011, my company developed a pseudo-ARG for the BBC Two factual series, The Code, all about mathematics. This involved planting clues into the show itself, along with online educational games and a treasure hunt.

To illustrate the concept of prime numbers, The Code explored the prime-numbered life cycles of periodical cicadas. We had no hand in the writing of the show; we got the script and developed our ARG around it. But this was enough to create a brand new conspiracy theory, featuring yours truly:

My bit starts around 20 minutes in:

Interviewer: Why [did you make a puzzle about] cicadas?

Me: Cicadas are known for having a gestation period which is linked to prime numbers. Prime numbers are at the heart of nature and the heart of mathematics.

Interviewer: That puzzle comes out in June 2011.

Me: Yeah.

Interviewer: Six months later, Cicada 3301 makes its international debut.

Me: It's a big coincidence.

Interviewer: There are some people who have brought up the fact that whoever's behind Cicada 3301 would have to be a very accomplished game maker.

Me: Sure.

Interviewer: You would be a candidate to be that person.

Me: That's true, I mean, Cicada 3301 has a lot in common with the games we've made. I think that one big difference (chuckles) is that normally when we make alternate reality games, we do it for money. And it's not so clear to understand where the funding for Cicada 3301 is coming from.

Clearly this was all just in fun – I knew it and the interviewer knew it. That’s why I agreed to take part. But does everyone watching this understand that? There’s no “SPEC” tag on the video. At least a few commenters are taking it seriously:

I’m not worried, but I’d be lying if I said I wasn’t a touch concerned that Cicada 3301 now lies squarely in the QAnon vortex and in the “Q-web”:

My defence that the cicada puzzle in The Code was “a big coincidence” (albeit delivered with an unfortunate shit-eating grin) didn’t hold water. In the conspiracy theorist mindset, there’s no such thing as a coincidence:

According to Michael Barkun, emeritus professor of political science at Syracuse University, three core principles characterize most conspiracy theories. Firstly, the belief that nothing happens by accident or coincidence. Secondly, that nothing is as it seems: the “appearance of innocence” is to be suspected. Finally, the belief that everything is connected through a hidden pattern.

These are helpful beliefs when playing an ARG or watching a TV show designed with twists and turns. It’s fun to speculate and to join seemingly disparate ideas, especially when the creators encourage and reward this behaviour. It’s less helpful when conspiracy theorists “yes, and…” each other into shooting up a pizza parlour or burning down 5G cell towers.

Because there is no coherent QAnon community in the same sense as the Cloudmakers, there’s no convention of “SPEC” tags. In their absence, YouTube has annotated QAnon videos with links to the Wikipedia article on QAnon, and Twitter has banned 7,000 accounts and restricted 150,000 more, among other actions. Facebook is reportedly planning to do the same.

These are useful steps but will not stop QAnon from spreading in social media comments or private chat groups or unmoderated forums. Stopping that isn’t something we can reasonably hope for, and I don’t think there’s any technological solution (e.g. browser extensions) either. The only way to stop people from mistaking speculation for fact is for them to want to stop.

Cryptic

It’s always nice to have a few mysteries for players to speculate on in an ARG, if only because it helps them pass the time while the poor puppetmasters scramble to sate players’ insatiable demand for more website updates and puzzles. A good mystery can keep a community guessing for years, as Lost did with its numbers or Game of Thrones with Jon Snow’s parentage. But these mysteries always have to be balanced against specifics, lest the whole story dissolve into a puddle of mush; for as much as we derided Lost for the underwhelming conclusion to its mysteries, no-one would’ve watched in the first place if the episode-to-episode storytelling wasn’t so strong.

The downside of being too mysterious in Perplex City was that cryptic messages often led players on wild goose chases, to the point that they completely ignored entire story arcs in favour of pursuing their own theories. This was bad for us because we had a pretty strict timetable that our story needed to play out on, pinned against the release of our physical puzzle cards that funded the entire enterprise. If players took too long to find the $200,000 treasure at the conclusion of the story, we might run out of money.

QAnon can favour cryptic messages because, as far as I know, its creators don’t have a specific timeline or goal in mind, let alone a production budget or paid staff. Not only is there no harm in followers misinterpreting messages, but it’s a strength: followers can occupy themselves with their own spin-off theories far better than “Q” can. Dan Hon notes:

For every ARG I’ve been involved in and ones my friends have been involved in, communities always consume/complete/burn through content faster than you can make it, when you’re doing a narrative-based game. This content generation/consumption/playing asymmetry is, I think, just a fact. But QAnon “solved” it by being able to co-opt all content that already exists and … encourages and allows you to create new content that counts and is fair play in-the-game.

But even QAnon needs some specificity, hence their frequent references to actual people, places, events, and so on.

A brief aside on designing very hard puzzles

It was useful to be cryptic when I needed to control the speed at which players solved especially consequential puzzles, like the one revealing where our $200,000 treasure was buried. For story and marketing purposes, we wanted players to be able to find it only once they had access to all 256 puzzle cards, which we released in three waves. We also wanted players to feel like they were making progress before they had all the cards, without being able to find the location the minute they had the last card.

My answer was to represent the location as the solution to multiple cryptic puzzles. One puzzle referred to the Jurassic strata in the UK, which I split across the backgrounds of 14 cards. Another began with a microdot revealing the order in which to arrange letter triplets I’d hidden on a number of cards. By performing modular arithmetic on the letters’ numerical values, you would arrive at 1, 2, 3 or 4, corresponding to the four DNA nucleotides. If you read the resulting triplets as codons for amino acids, they became letters. These letters spelt out the phrase “Duke of Burgundy”, the name of a butterfly whose habitat, when combined with the Jurassic strata, would help you narrow down the location of the treasure.
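To make the letters-to-DNA-to-letters chain concrete, here is a rough sketch of how such a decoding could work. Everything specific in it is an assumption for illustration: the mod rule (A=1 … Z=26, reduced into 1–4), the digit-to-nucleotide mapping, and the use of the standard genetic code with one-letter amino acid abbreviations. The actual Perplex City puzzle’s rules aren’t given here, and the example triplets are made up.

```python
# Hypothetical sketch of a letter-triplet -> codon -> letter decoding chain.
# The mod rule, digit-to-base mapping and codon table are assumptions,
# not the rules of the real Perplex City puzzle.

BASES = "TCAG"  # base order used to index the compact codon table below
# Standard genetic code, codons enumerated with first/second/third base in
# TCAG order; "*" marks a stop codon.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"

# Assumed mapping from the digits 1-4 to the four nucleotides.
DIGIT_TO_BASE = {1: "A", 2: "C", 3: "G", 4: "T"}


def letter_to_digit(letter: str) -> int:
    """Assumed mod rule: A=1, B=2, ... Z=26, reduced into the range 1-4."""
    value = ord(letter.upper()) - ord("A") + 1
    return (value - 1) % 4 + 1


def codon_to_amino_acid(codon: str) -> str:
    """Look up a three-base codon in the standard genetic code."""
    i, j, k = (BASES.index(base) for base in codon)
    return AMINO_ACIDS[16 * i + 4 * j + k]


def decode_triplet(triplet: str) -> str:
    """Turn one hidden letter triplet into a single amino-acid letter."""
    codon = "".join(DIGIT_TO_BASE[letter_to_digit(ch)] for ch in triplet)
    return codon_to_amino_acid(codon)


def decode(triplets: list[str]) -> str:
    """Decode a sequence of triplets, in the order given, into a string."""
    return "".join(decode_triplet(t) for t in triplets)


if __name__ == "__main__":
    # Made-up triplets: "ADC" -> ATG -> M, "DCC" -> TGG -> W.
    print(decode(["ADC", "DCC"]))  # prints MW
```

Each layer (ordering, arithmetic, genetics) is a separate step that can stall a community, which is also what makes it possible to release an extra hint for just one layer.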

The nice thing about this convoluted sequence is that we could provide additional online clues to help the player community when they got stuck. The point being, you can’t make an easy puzzle harder, but you can make a hard puzzle easier.

Beyond ARGs

It can feel crass to compare ARGs to a conspiracy theory that’s caused so much harm. But this reveals the crucial difference between them: in QAnon, the stakes are so high that any action seems justified. If you truly believe an online store or a pizza parlour is engaging in child trafficking and the authorities are complicit, extreme behaviour follows.

Gabriel Roth extends this idea:

What QAnon has that ARGs didn’t have is the claim of factual truth; in that sense it reminds me of the Bullshit Anecdotal Memoir wave of the ’90s and early ’00s. If you have a story based on real life, but you want to make it more interesting, the correct thing to do is change the names of the people and make it as interesting as you like and call it fiction.

The insight of the Bullshit Anecdotal Memoirists (I’m thinking of James Frey and Augusten Burroughs and David Sedaris) was that you could call it nonfiction and readers would like it much better because it would have the claim of actual factual truth, wowee!! And it worked!

How much more engaging and addictive is an immersive, participatory ARG when it adds that unique frisson you can only get with the claim of factual truth? And bear in mind that ARG-scale stories aren’t about mere personal experiences—they operate on a world-historical scale.

ARGs’ playfulness with the truth and their sometimes-imperceptible winking at This Is Not A Game (cf. accusations that Lonelygirl15 was a hoax) is only the most modern incarnation of epistolary storytelling. In that context, immersive and realistic stories have long elicited extreme reactions, like the panic incited by Orson Welles’ The War of the Worlds (often exaggerated, to be fair).

We don’t have to wonder what happens when an ARG community meets a matter of life and death. Not long after The Beast concluded, the 9/11 attacks happened. A small number of posters in the Cloudmakers mailing list suggested the community use its skills to “solve” the question of who was behind the attack.

The brief but intense discussion that ensued has become a cautionary tale of ARG communities getting carried away and being unable to distinguish fiction from reality. In reality, the community and the moderators quickly shut down the idea as being impractical, insensitive, and very dangerous. “Cloudmakers tried to solve 9/11” is a great story, but it’s completely false.

Unfortunately, the same can’t be said of the poster child for online sleuthing gone wrong, the r/findbostonbombers subreddit. There’s a parallel between the essentially unmoderated, anonymous theorists of r/findbostonbombers and those in QAnon: neither feels any responsibility for spreading unsupported speculation as fact. What they do feel is that anything should be solvable, as Laura Hall describes:

There’s a general sense of, “This should be solveable/findable/etc” that you see in lots of reddit communities for unsolved mysteries and so on. The feeling that all information is available online, that reality and truth must be captured/in evidence somewhere

There’s truth in that feeling. There is a vast amount of information online, and sometimes it is possible to solve “mysteries”, which makes it hard to criticise people for trying, especially when it comes to stopping perceived injustices. But it’s the sheer volume of information online that makes it so easy and so tempting and so fun to draw spurious connections.

That joy of solving and connecting and sharing and communicating can do great things, and it can do awful things. As Josh Fialkov, writer for Lonelygirl15, says:

That brain power negatively focused on what [conspiracy theorists] perceive as life and death (but is actually crassly manipulated paranoia) scares the living shit out of me.

What ARGs Can Teach Us

Can we make “good ARGs”? Could ARGs inoculate people against conspiracy theories like QAnon?

The short answer is: No. When it comes to games that are educational and fun, you usually have to pick one, not both – and I say that as someone who thinks he’s done a decent job at making “serious games” over the years. That doesn’t mean it’s impossible, but it’s really hard, and I doubt any such ARG would get played by the right audience anyway.

The long answer: I’m writing a book about the perils and promise of gamification. Come back in a year or two.

For now, here’s a medium-sized answer. No ARG can heal the deep mistrust and fear and economic and spiritual malaise that underlies QAnon and other dangerous conspiracy theories, any more than a book or a movie can solve racism. There are hints of ARG-like things that could work, though – not in directly combatting QAnon’s appeal, but in channeling people’s energy and zeal for community-based problem-solving toward better causes.

Take The COVID Tracking Project, an attempt to compile the most complete data available about COVID-19 in the US. Every day, volunteers collect the latest numbers on tests, cases, hospitalizations, and patient outcomes from every state and territory. In the absence of reliable governmental figures, it’s become one of the best sources not just in the US, but in the world.

It’s also incredibly transparent. You can drill down into the raw data volunteers have collected on Google Sheets, view every line of code written on GitHub, and ask them questions on Slack. Errors and ambiguities in the data are quickly disclosed and explained rather than hidden or ignored. There’s something game-like in the daily quest to collect the best-quality data and to continually expand and improve the metrics being tracked. And like in the best ARGs, volunteers of all backgrounds and skills are welcomed. It’s one of the most impressive and well-organised reporting projects I’ve ever seen; “crowdsourcing” doesn’t even come close to describing its scale.

If you applied ARG skills to investigative journalism, you’d get something like Bellingcat, an open-source intelligence group that discovered how Malaysia Airlines Flight 17 (MH17) was shot down over Ukraine in 2014. Bellingcat’s volunteers painstakingly pieced together publicly available information to determine MH17 was downed by a Buk missile launcher originating from the 53rd Anti-Aircraft Rocket Brigade in Kursk, Russia. The Dutch-led international joint investigation team later came to the same conclusion.

Conspiracy theories thrive in the absence of trust. Today, people don’t trust authorities because authorities have repeatedly shown themselves to be unworthy of trust – misreporting or manipulating COVID-19 testing figures, delaying the publication of government investigations, burning records of past atrocities, and deploying unmarked federal forces. Perhaps authorities were just as untrustworthy twenty or fifty or a hundred years ago, but today we rightly expect more.

Mattathias Schwartz believes it’s that lack of trust that leads people to QAnon:

Q’s [followers] … are starving for information. Their willingness to chase bread crumbs is a symptom of ignorance and powerlessness. There may be something to their belief that the machinery of the state is inaccessible to the people. It’s hard to blame them for resorting to fantasy and esotericism, after all, when accurate information about the government’s current activities is so easily concealed and so woefully incomplete.

So the goal cannot be to simply restore trust in existing authorities. Rather, I think it’s to restore faith in truth and knowledge itself. The COVID Tracking Project and Bellingcat help reveal truth by crowdsourcing information. They show their work via hypertext and open data, creating a structure upon which higher-level analysis and journalism can be built. And if they can’t find the truth, they’re willing to say so.

QAnon seems just as open. Everything is online. Every discussion, every idea, every theory is joined together in a warped edifice where speculation becomes fact and fact leads to action. It’s thrilling to discover, and as you find new terms to Google and new threads to pull upon, you can feel just like a real researcher. And you can never get bored. There’s always new information to make sense of, always a new puzzle to solve, always a new enemy to take down.

QAnon fills the void of information that states have created – not with facts, but with fantasy. If we don’t want QAnon to fill that void, someone else has to. Government institutions can’t be relied upon to do this sustainably, given how underfunded and politicised they’ve become in recent years. Traditional journalism has also struggled against its own challenges of opacity and lack of resources. So maybe that someone is… us.

ARGs teach us that the search for knowledge and truth can be immensely rewarding, not in spite of their deliberately fractured stories and near-impossible puzzles, but because of them. They teach us that communities can self-organise and self-moderate to take on immense challenges in a responsible way. And they teach us that people are ready and willing to volunteer if they’re welcomed, no matter their talent.

It’s hard to create these communities. They rely on software and tools that aren’t always free or easy to use. They need volunteers who have spare time to give and moderators who can be supported, financially and emotionally, through the struggles that always come. These communities already exist. They just need more help.

Despite the growing shadow of QAnon, I’m hopeful for the future. The beauty of ARGs and ARG-like communities isn’t their power to discover truth. It’s how they make the process of discovery so deeply rewarding.

Follow me @adrianhon on Twitter.

Want to know when my book on gamification is coming out? Subscribe to my very rarely updated newsletter!