If you’ve been paying attention to the big tech headlines recently, you’ll have noticed the same trend as I have. Apple Watch. Microsoft HoloLens. Magic Leap. Wearable computing is on everyone’s minds (and arms, and faces). But all these people getting excited about their glasses and digital crowns are late to the party. We’ve all been part of an invisible wearable tech revolution without even knowing it.

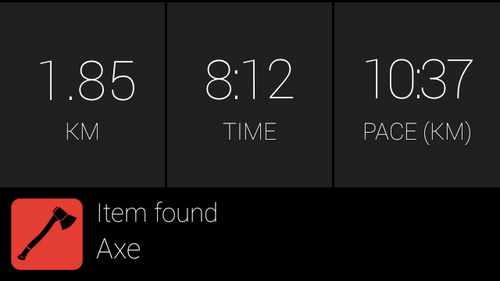

Does this sound familiar? You strap your phone to your arm, pop your earbuds in and head out for your run. GPS tracks your location, the phone’s accelerometer and gyro sensors give you detailed stats about your elevation and split times, you hear mile markers and pace updates and maybe even zombies behind you, your phone vibrates when it needs your attention — and a dozen other functions besides. I’d bet a decent number of you have this experience multiple times a week. And you know what? That sounds a hell of a lot like wearable computing to me.

So, ignore all those people waving their shiny plastics in your face on stage and telling you it’s the future of wearable tech. That future’s already here, and fitness gaming is a huge part of it. Let’s have a look.

Back in 2008, users of Nike+ and Runkeeper treated the iPhone as a wearable computer. The value of having audio updates of your run, plus a GPS trace and other stats afterwards, far outweighed the inconvenience of strapping on a phone or buying a separate GPS device.

When we designed Zombies, Run! in 2011, it was this added value that we were thinking about: the capability to provide a richly interactive, location-aware running experience. We knew that runners already wore headphones for music, so we made audio our primary mode of output (fitting nicely with our expertise in audio production and storytelling) rather than making people look at the screen.

We also knew that GPS and accelerometer data were just about good enough to serve as an input method. That meant we could see whether users were outrunning our virtual zombies or not. Again, this worked better than making people touch the screen.
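
To make the idea of movement-as-input concrete, here is a minimal sketch in Python of how a stream of GPS fixes could decide whether a runner is staying ahead of a virtual pursuer. It is not the Zombies, Run! implementation: the names `Fix`, `haversine_m` and `chase`, along with the zombie speed and head start, are hypothetical and chosen purely for illustration. A real app would also need to smooth noisy fixes, which this sketch ignores.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


@dataclass
class Fix:
    """One GPS sample: timestamp in seconds, position in decimal degrees."""
    t: float
    lat: float
    lon: float


def haversine_m(a: Fix, b: Fix) -> float:
    """Great-circle distance between two fixes, in metres."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlam = math.radians(b.lon - a.lon)
    h = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def chase(fixes, zombie_speed_mps: float, head_start_m: float) -> float:
    """Return the runner's final lead in metres; 0.0 means they were caught.

    Hypothetical rule: zombies move at a constant speed, so the lead grows
    when the runner covers more ground than the zombies between two fixes
    and shrinks otherwise.
    """
    gap = head_start_m
    for prev, cur in zip(fixes, fixes[1:]):
        dt = cur.t - prev.t
        if dt <= 0:
            continue  # skip duplicate or out-of-order samples
        gap += haversine_m(prev, cur) - zombie_speed_mps * dt
        if gap <= 0:
            return 0.0  # the zombies have closed the gap
    return gap
```

Calling `chase(fixes, zombie_speed_mps=3.0, head_start_m=20.0)` with fixes taken every 30 seconds would tell you how far ahead the runner finished. Everything here happens without the player touching or looking at the screen, which is the whole point.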

That said, without screen-based interaction, we felt we couldn’t make Zombies, Run! truly location-sensitive. We couldn’t reliably direct people to run to precise locations (GPS wasn’t, and still isn’t, fast or accurate enough, particularly for the level of safety we need), or give them frequent gameplay or story choices, or let them see the position of zombies relative to themselves.

Other apps have, in fact, tried these things, and I think they failed for the simple reason that no-one wants to run while looking at a handheld screen. The truth is, there have been precious few successful games for wearable computers.

Smartwatches

Will the Apple Watch change things? Probably not — at least, not yet.

Firstly, Apple’s WatchKit SDK is extremely limited. It’s not at all designed for games. While I’m sure we’ll see some, such as Nimblebit’s proposed Letterpad or things like the wrist-games of yore, I find it hard to believe that people will prefer them over more fully-featured games on smartphones. After all, there’s a reason why phones are getting bigger, rather than smaller.

Regardless of the adoption rate of smartwatches, I see no reason to believe that they’ll host successes on the scale of Angry Birds or Clash of Clans or Monument Valley. Even as a peripheral, it’s hard to see smartwatches becoming popular enough that game developers would be willing to rely on them, other than for specialised games (look at what happened with the Wii and Kinect) or for basic notifications and choices (“Trade 20 wood for 5 gold? YES/NO”).

Apple’s own Human Interface Guidelines make their views very clear:

> If you measure interactions with your iOS app in minutes, you can expect interactions with your WatchKit app to be measured in seconds. So interactions should be brief and interfaces should be simple.

Seconds. In other words, they don’t recommend developers make lengthy experiences.

And let’s not forget the lacklustre sales of Android Wear thus far: the required Android Wear app has been downloaded fewer than a million times, and the watches have seen brutally steep discounting.

In time, smartwatches will grow in capability and in battery life. They’ll eventually gain independent cellular connectivity, they’ll get cheaper, and they’ll get better. But there are some important strengths and weaknesses to bear in mind.

- Smartwatches provide an always-viewable screen that you don’t have to hold in your hands, but that screen is very small and hard to focus on while walking, let alone running (and if you’re not moving, why not use your phone?). As far as fitness or urban games go, making people squint at markers on a map means forcing them to stop running every 30 seconds.

- Vibration and ‘taptic’ alerts may become a great way of conveying simple information without a screen, although the taps may end up being confused with other notifications, and developers may never receive full control of the vibration engine.

- Touchscreens provide far more input choices than a phone strapped to your arm (i.e. more than zero), but the touch targets are small and hard to hit while moving.

- Hardware buttons and controls give a simpler, eyes-free input method, but developer access is mixed; e.g. there is no realistic way to use the Apple Watch’s ‘digital crown’ to control third-party apps other than by scrolling a page.

- Built-in accelerometers, gyros, barometers, and heart rate monitors, crucially in a known body position (the wrist), promise wonderful new health and fitness applications, including the ability to recognise gestures and movement when developers receive full access.

The good news is that many of these weaknesses will resolve themselves over the next five years in the same way that smartphones, and in particular the iPhone, steadily increased the access and power available to developers.

Heads-Up Displays

We developed a special version of Zombies, Run! for Google Glass in 2014. It was an interesting exercise, worthwhile for the PR value alone. However, it was not particularly useful from an R&D perspective, other than confirming that the device was nowhere near ready for primetime, Google’s marketing notwithstanding. Why?

- It’s really hard to see a little screen while you’re running. It’s bouncing up and down all the time, it’s only in your right eye, plus your eyes have to focus at infinity to resolve the screen; not fun when you’re also trying to watch your footing.

- It’s not weatherproofed.

- The hardware and software were half-baked.

- The voice and touch input was unreliable, unintuitive, and embarrassing.

Glass’ one redeeming feature was that I felt so self-conscious wearing it that I ran significantly faster to avoid people staring at me.

The Future

I know it doesn’t sound like it, but in the long term, I’m very optimistic about both smartwatches and heads-up displays, and here’s why.

I see wearable computers less as single devices than as personal area networks: collections of devices, including a phone, a watch, and headphones or heads-up displays, that can operate independently or in concert. At their fullest powers, they could open up a whole new world of multiplayer urban and physical gaming; games that rely just as much on bluffing and body language as they do on physical strength.

And yet… it’s not easy to miniaturise processors and displays and batteries to the point where they can comfortably fit on our bodies. It’ll be a few years until we understand what these devices are best used for.

So: let’s not get carried away.

Thanks to Matt Wieteska for his input to this piece.